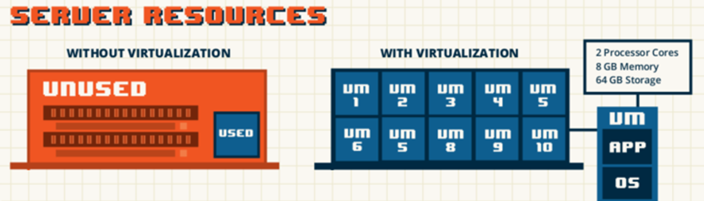

Virtualization

- Virtual machine: a virtual machine (VM) is an emulation of a particular computer system and its resources, such as CPU, RAM and disk space.

- Hypervisor: also known as a Virtual Machine Monitor (VMM), a hypervisor is a piece of computer software or hardware that creates and runs virtual machines. Popular hypervisors include VMware ESXi, Xen (used by AWS) and Microsoft Hyper-V.

Similarly, in IT, virtualization makes it more efficient to divide a computer’s resources, such as processors, memory and storage, and then assign these resources to virtual machines, each capable of hosting its own operating system and applications.
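To make the idea of dividing a host's resources concrete, here is a minimal sketch in Python. It is a toy model only: `Resources`, `ToyHypervisor` and `create_vm` are hypothetical names invented for this illustration and do not correspond to the API of any real hypervisor. The sketch assumes the host simply refuses a VM whose request exceeds what is still free.

```python
from dataclasses import dataclass, field


@dataclass
class Resources:
    """A bundle of CPU cores, RAM (GB) and disk space (GB)."""
    cpus: int
    ram_gb: int
    disk_gb: int

    def fits_in(self, other: "Resources") -> bool:
        """True if this request is no larger than `other` in every dimension."""
        return (self.cpus <= other.cpus
                and self.ram_gb <= other.ram_gb
                and self.disk_gb <= other.disk_gb)


@dataclass
class ToyHypervisor:
    """Hypothetical model: tracks what is left of the physical host."""
    free: Resources
    vms: dict = field(default_factory=dict)

    def create_vm(self, name: str, guest_os: str, want: Resources) -> bool:
        """Reserve resources for a new VM; refuse if the host cannot cover them."""
        if name in self.vms or not want.fits_in(self.free):
            return False
        self.free = Resources(self.free.cpus - want.cpus,
                              self.free.ram_gb - want.ram_gb,
                              self.free.disk_gb - want.disk_gb)
        # Each VM records its own guest operating system and its share of the host.
        self.vms[name] = (guest_os, want)
        return True


host = ToyHypervisor(free=Resources(cpus=16, ram_gb=64, disk_gb=1000))
print(host.create_vm("web", "Linux", Resources(4, 16, 200)))    # True
print(host.create_vm("db", "Windows", Resources(8, 32, 500)))   # True
print(host.create_vm("big", "Linux", Resources(8, 32, 500)))    # False: only 4 CPUs left
print(host.free)  # Resources(cpus=4, ram_gb=16, disk_gb=300)
```

Real hypervisors are far more sophisticated (they can, for example, overcommit CPU and memory), but the bookkeeping above captures the basic point: one physical machine, several isolated guests, each with its own operating system.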

Containerization

- Container: a container wraps a piece of software in a complete file system that contains everything needed to run it: code, system tools, system libraries, anything that can be installed on a server.

- Docker: an open-source platform for packaging and running containers. Its main benefit is to package applications in “containers,” making them portable across systems.

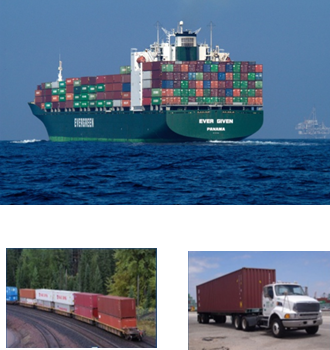

In 1955, Malcolm McLean, a trucking entrepreneur from North Carolina, USA, bought a steamship company with the idea of transporting entire truck trailers with their cargo still inside. That was the birth of the shipping container.

Benefits:

- Standardization on shape, size, volume and weight.

- Massive economies of scale. Reduction in shipping costs.

- Seamless movement across sea, rail and road.

Applying a similar concept, containers such as Docker's let software run reliably when moved from one computing environment to another. The same application can run on a developer’s laptop, then in a test environment and finally in a production environment.

Docker container benefits:

- Cost savings: Containers share the host's operating system kernel instead of each running a full guest operating system, so fewer resources are necessary to run the same application. Organizations are able to save on everything from server costs to the staff needed to maintain them.

- Portability: Applications running on Docker can move from a developer’s laptop to on-premise infrastructure, to the cloud (such as Amazon Web Services, Google Cloud or Microsoft Azure) or to a PaaS such as OpenShift by Red Hat or Bluemix by IBM.

- Rapid Deployment: With a smaller footprint and simple deployment commands, Docker can reduce deployment time to seconds.

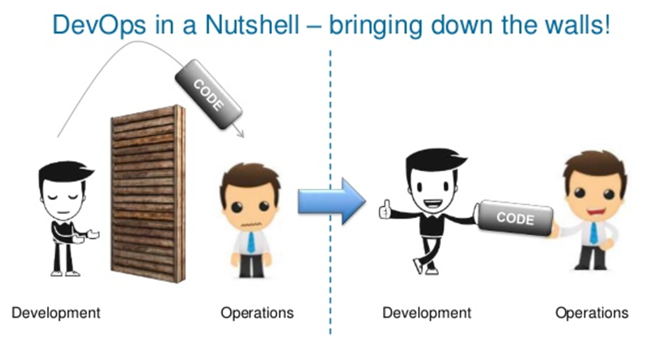

DevOps

- DevOps: A combination of practices and tools that increases an organization’s ability to deliver applications at high velocity. This speed enables organizations to better serve their customers and compete more effectively in the market.

- Continuous Integration: A practice that requires developers to integrate code into a shared code repository (e.g. GitHub, SVN) several times a day. Each check-in (commit) is then verified by an automated build and automated tests, allowing teams to detect problems early.

- Continuous Deployment: A strategy for software releases wherein any commit that passes the automated testing phase is automatically released into production.
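The relationship between the last two definitions can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular CI/CD tool works: `run_pipeline`, `deploy_to_production`, the check names and the commit IDs are all hypothetical, and the "checks" are stand-in functions that simply return `True` or `False`.

```python
from typing import Callable, List, Tuple

# A check is a (name, function) pair; the function returns True on success.
Check = Tuple[str, Callable[[], bool]]


def run_pipeline(commit: str, checks: List[Check],
                 deploy: Callable[[str], None]) -> bool:
    """Continuous integration: run every check for the commit, in order.
    Continuous deployment: release automatically only if all checks pass."""
    for name, check in checks:
        if not check():
            print(f"{commit}: {name} failed, not deploying")
            return False
    deploy(commit)
    return True


released: List[str] = []


def deploy_to_production(commit: str) -> None:
    """Stand-in for a real release step; just records the commit."""
    released.append(commit)


# Hypothetical check results for two commits.
good = [("build", lambda: True), ("unit tests", lambda: True)]
bad = [("build", lambda: True), ("unit tests", lambda: False)]

run_pipeline("abc123", good, deploy_to_production)
run_pipeline("def456", bad, deploy_to_production)
print(released)  # ['abc123']
```

The key design point is that the release step has no manual gate: the automated checks are the only thing standing between a commit and production, which is why early, frequent integration matters.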

